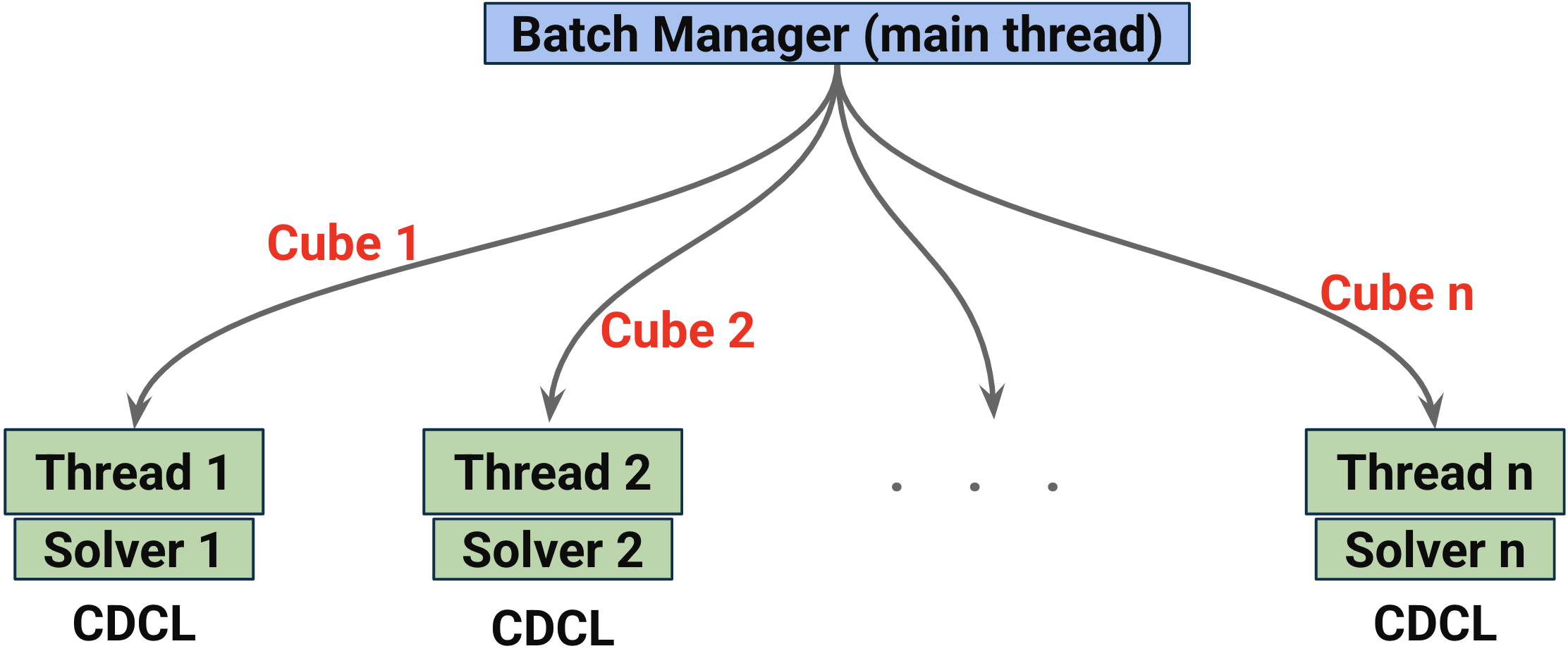

Parallelizing Z3: Adaptive Cubing via On-The-Fly Sampling of CDCL Conflict Traces (2025.07-present) [slides]

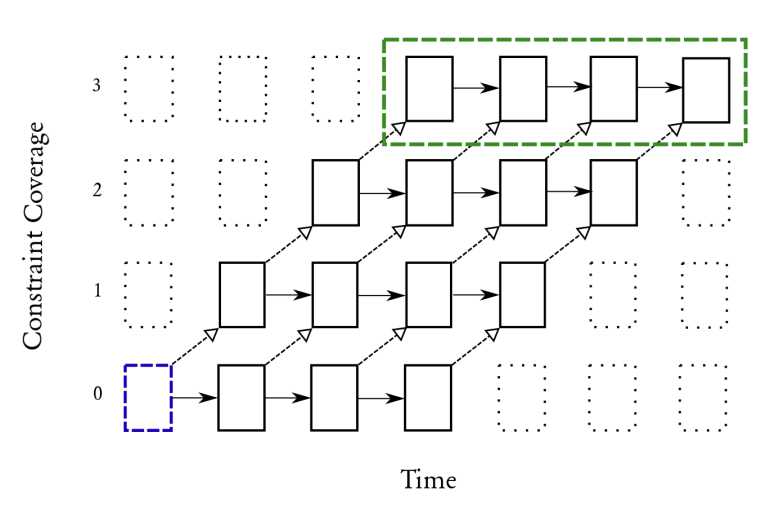

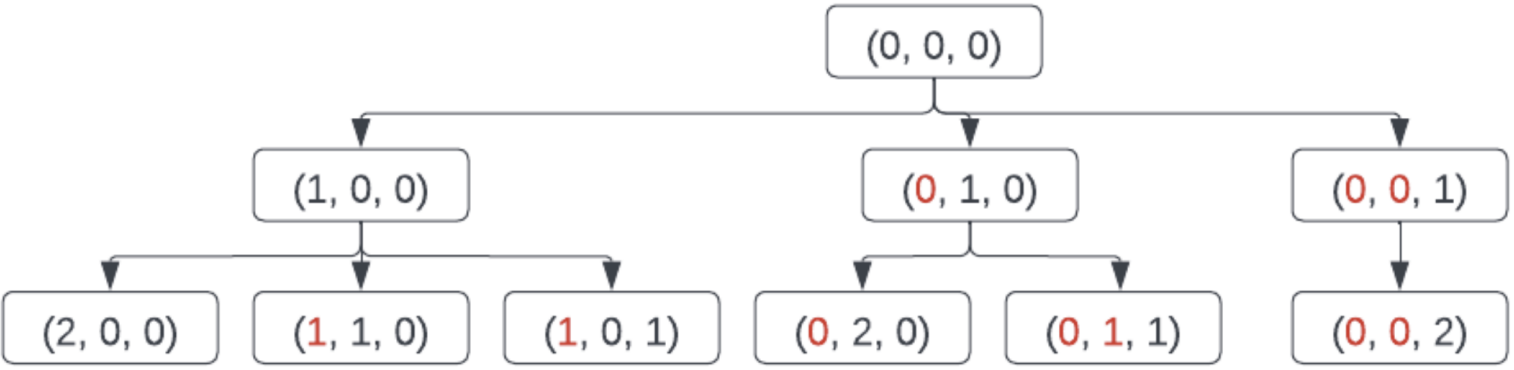

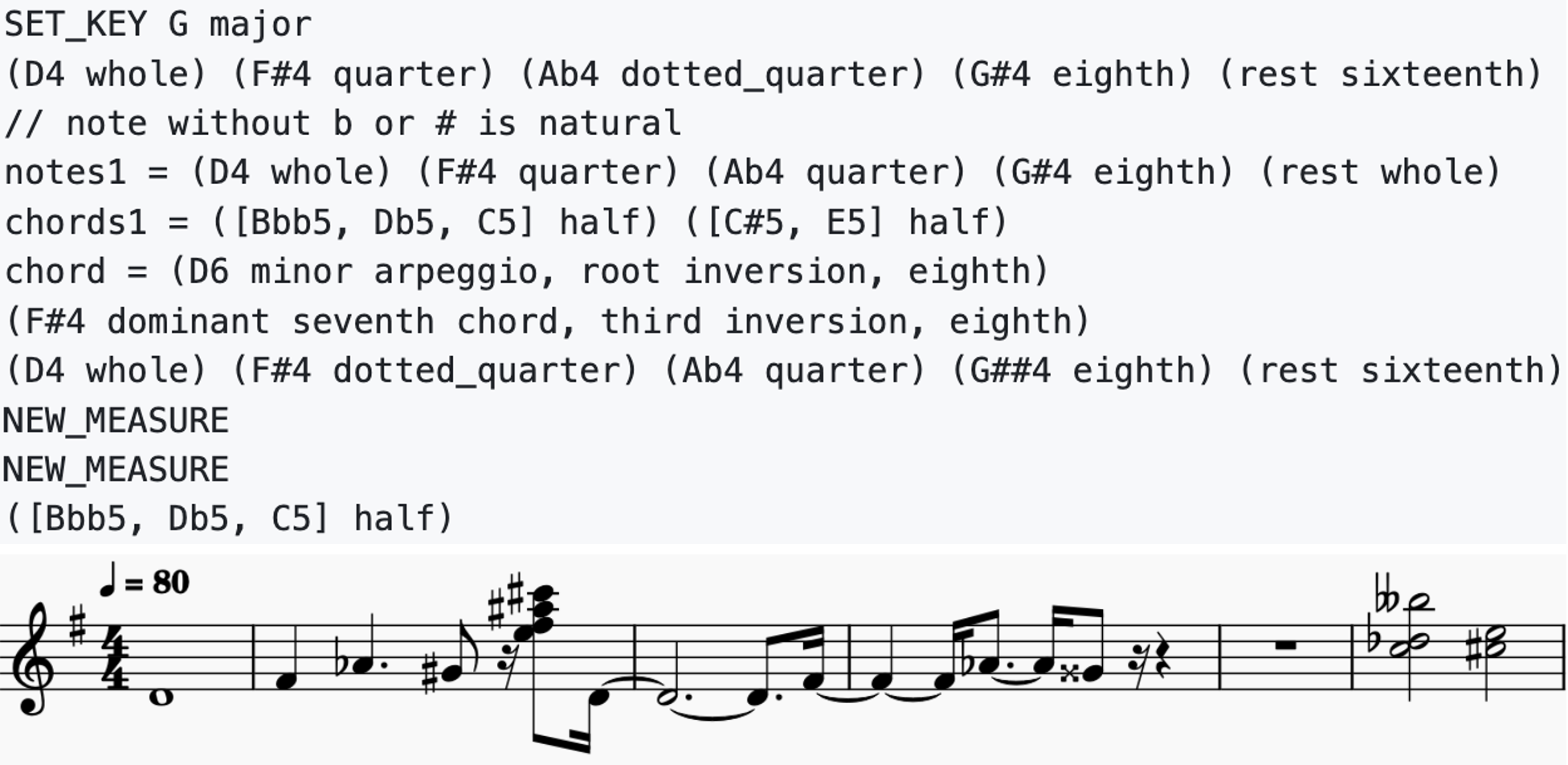

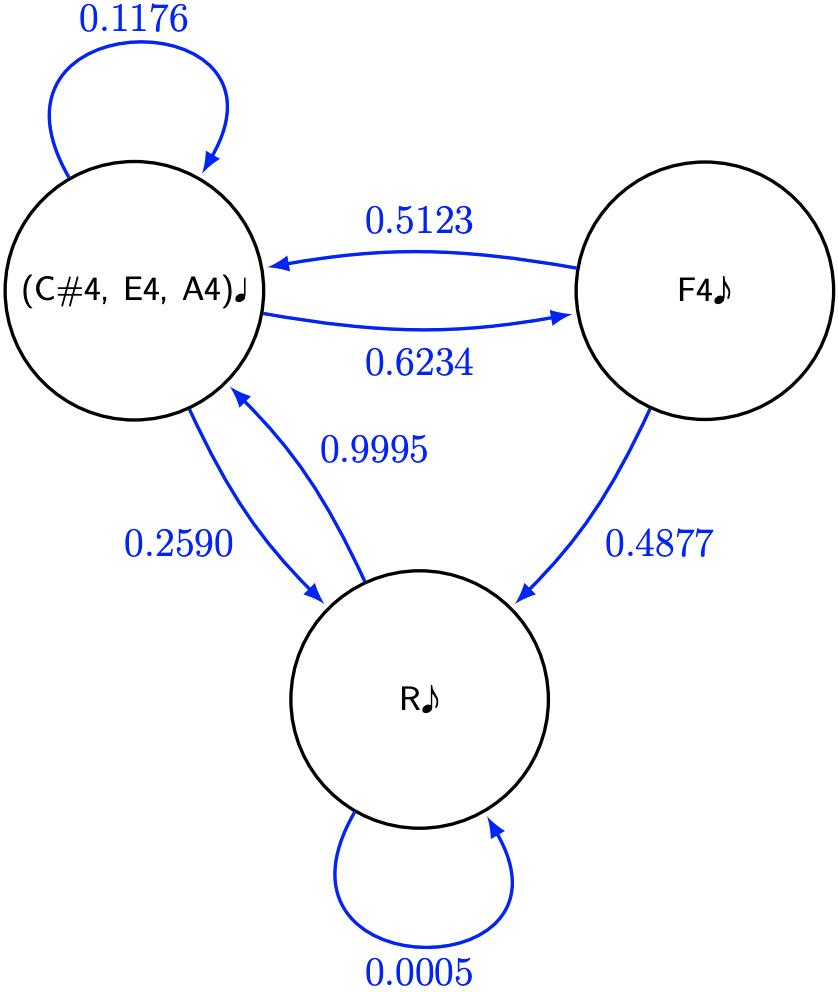

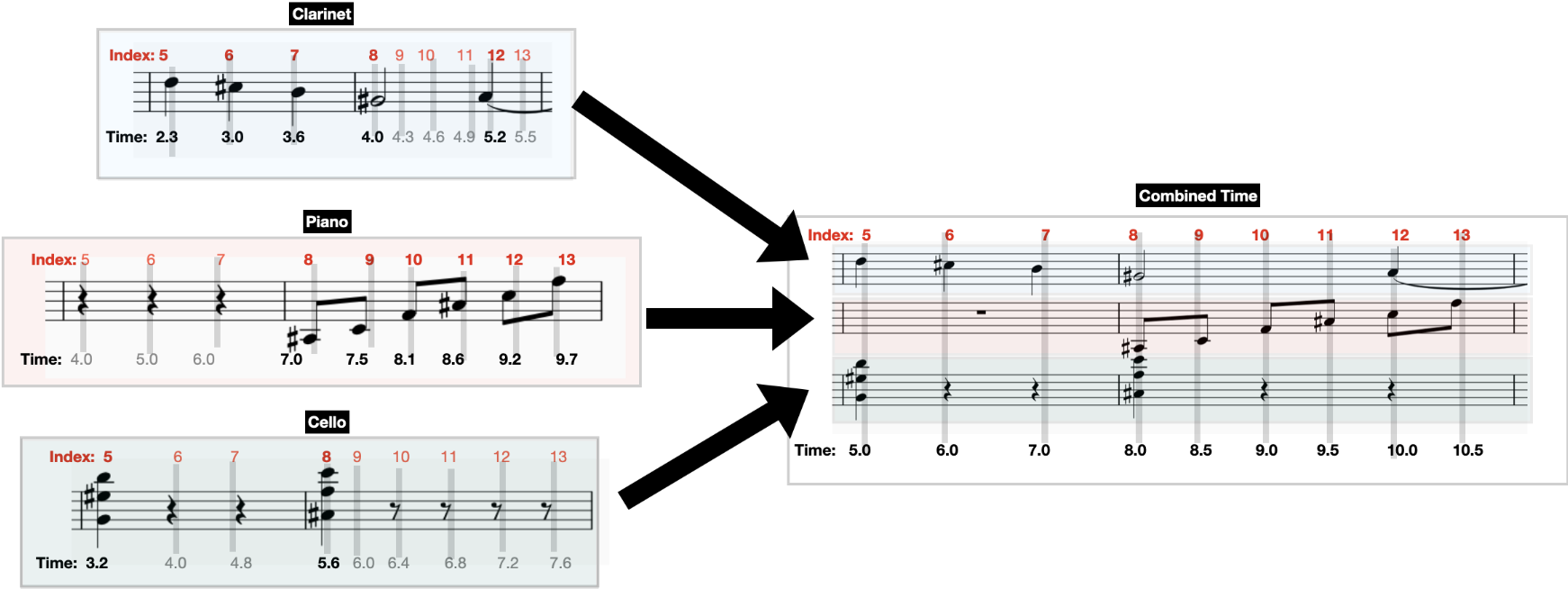

At Microsoft Research, I worked with Nikolaj Bjorner to design and implement a novel parallelization algorithm for the SMT solver Z3. We developed an online cube-and-conquer approach, where cubes are dynamically sampled during solving from CDCL conflict-variable heuristics. Cubes are distributed to worker threads based on similarity, allowing each thread to maximize reuse of its existing solver state. My contribution was merged into Z3’s master branch. See my fork for the experimental versions at the end of my internship, or the main repo for the merged code. We are continuing to improve our algorithm, exploring approaches such as online parameter tuning, search tree resolution optimizations, and backbone detection heuristics.